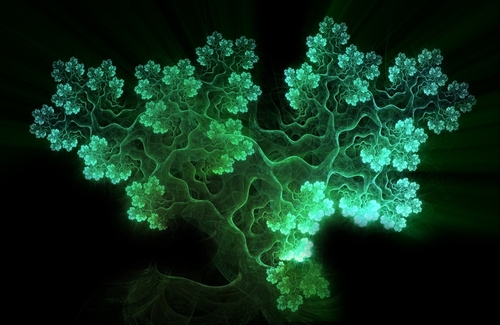

In a breakthrough that feels plucked straight from the world of Avatar, scientists have unveiled a new generation of glow-in-the-dark plants: succulents that glow in vivid hues after absorbing sunlight. This development, led by researchers at South China Agricultural University, marks a notable step forward in sustainable lighting and plant engineering.

Unlike previous attempts that relied on genetic modification using bioluminescent genes from fireflies or fungi, this new method uses “afterglow” phosphor particles. These particles, made from strontium aluminate combined with other metals, are injected into the leaves of succulents. Once exposed to light, they absorb energy and slowly release it over time, emitting a soft glow that can last for hours.

What sets this innovation apart is its simplicity and versatility. The particles are carefully sized—about 6 to 8 micrometers, roughly the width of a red blood cell—allowing them to diffuse efficiently through plant tissues while still producing a strong luminescent effect. The result? Succulents that shine in a rainbow of colors, including green, red, blue, and violet. Researchers even created a wall of 56 glowing plants bright enough to read by.

The implications of this technology are both practical and poetic.

Sustainable Lighting

Imagine replacing streetlights with glowing trees or using afterglow plants as ambient lighting in homes, offices, or public spaces. These living lights could reduce energy consumption and carbon emissions, especially in regions with abundant sunlight. Unlike traditional bulbs, they require no wiring, batteries, or electricity, just a dose of daylight.

Art and Design

Glowing plants open new doors for landscape architecture and interior design. From glowing garden paths to radiant centerpieces, they offer a dynamic, eco-friendly alternative to artificial lighting. Their multicolored glow can be tailored to evoke specific moods or aesthetics, transforming spaces into immersive, living art installations.

Educational and Scientific Tools

These plants could serve as engaging tools in classrooms and labs, helping students visualize concepts like energy transfer, photosynthesis, and materials science. Their glow also makes them useful for imaging and tracing in biological research, where visibility in low-light conditions is crucial.

Environmental Monitoring

Future iterations might integrate sensors that respond to environmental changes—glowing brighter in response to pollutants or temperature shifts. This could turn ordinary plants into bioindicators, offering real-time feedback on ecosystem health.

While the current method involves injecting particles manually, researchers are exploring ways to make the process scalable and even self-sustaining. The dream is to engineer plants that can absorb and emit light naturally, without external intervention.

As study lead Shuting Liu puts it, “Picture the world of Avatar, where glowing plants light up an entire ecosystem. We wanted to make that vision possible using materials we already work with in the lab.”

With this luminous leap forward, the line between nature and technology continues to blur—inviting us to imagine a future where our cities glow not with neon, but with life.